Understanding Circuits in Large Language Models

A circuit is like a small set of connections inside the model that helps it do a specific job, such as picking the right person in a sentence or continuing a number sequence.

Modern transformers and large language models (LLMs) are powerful, but they work like black boxes: it’s hard to see how they arrive at an answer. Mechanistic interpretability is a field that tries to open these boxes and understand the steps models take to produce outputs.

The key insight? Models contain circuits, small pathways of connections that perform specific tasks, like identifying who receives an action in a sentence or continuing a number pattern. In this newsletter, we’ll explore what circuits are, why they matter, how researchers find them, and what we’ve learned from studying real examples.

What Is a Circuit?

Let’s start with an intuitive example. Imagine a sentence:

“When John and Mary went to the store, John gave a toy to ___.”

The model needs to figure out that Mary receives the toy.

Inside the network, certain groups of connections work together: some track where the names appear, others identify the verb, and together they point to the correct recipient. This coordinated group of connections is a circuit, a pathway through the model that solves this specific task (see also our article “Alice Gave a Book to Bob”).

The Technical View

A circuit is a directed sub-graph made up of features (specific activation patterns) that interact through the model’s architecture (Elhage et al. 2021). In transformers, attention heads and multi-layer perceptron (MLP) layers read from and write to a central highway called the residual stream. The final state of the residual stream is the sum of the token embedding and every component’s output. After a final layer normalization, it is projected onto the vocabulary to score which token comes next.
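To make the residual-stream picture concrete, here is a toy sketch in plain Python. The dimensions, weights, and the two stand-in components (`toy_attn`, `toy_mlp`) are invented for illustration. The sketch also ignores layer normalization and the fact that heads within a layer read the stream in parallel. It only shows the additive read-and-write pattern and the final projection to logits.

```python
def add(u, v):
    """Elementwise sum of two equal-length vectors."""
    return [a + b for a, b in zip(u, v)]


def vec_mat(vec, mat):
    """Row vector times matrix (mat is a list of rows)."""
    return [sum(vec[i] * mat[i][j] for i in range(len(vec))) for j in range(len(mat[0]))]


def run_residual_stream(embedding, components, unembed):
    """Each component reads the current stream and adds its output back in."""
    resid = list(embedding)
    for component in components:
        resid = add(resid, component(resid))
    return vec_mat(resid, unembed)  # logits: one score per vocabulary item


# Hypothetical components acting on a 2-d residual stream.
def toy_attn(resid):
    return [0.5 * resid[1], 0.0]


def toy_mlp(resid):
    return [0.0, max(resid[0], 0.0)]


W_U = [[1.0, 0.0, -1.0],   # hypothetical unembedding: 2 dims -> 3 tokens
       [0.0, 1.0, 1.0]]

logits = run_residual_stream([1.0, 2.0], [toy_attn, toy_mlp], W_U)
print(logits)  # [2.0, 4.0, 2.0]
```

Because every component only adds to the stream, the final logits can be split into per-component contributions. That additivity is what later circuit analyses exploit.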

Transformers of different depths can implement different algorithms:

Zero-layer models act like bigram models, predicting the next token from the current token alone (Elhage et al. 2021; Olsson et al. 2022a)

One-layer models can look further back, creating skip-trigram patterns (Elhage et al. 2021; Olsson et al. 2022a)

Two-layer models can implement induction heads, which find an earlier occurrence of the current token and copy the token that followed it (Elhage et al. 2021; Olsson et al. 2022b)

Understanding these building blocks helps researchers trace how more complex behaviors emerge.
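A zero-layer transformer is small enough to write out completely. The sketch below uses a hypothetical vocabulary and hypothetical weights. It embeds only the current token and immediately unembeds it, which is why such a model can do no better than bigram statistics.

```python
VOCAB = ["the", "cat", "sat"]
W_E = {"the": [1.0, 0.0], "cat": [0.0, 1.0], "sat": [0.5, 0.5]}  # token -> 2-d embedding
W_U = [[0.0, 2.0, 0.0],   # dimension 0 pushes toward "cat"
       [0.0, 0.0, 2.0]]   # dimension 1 pushes toward "sat"


def zero_layer_logits(context):
    """Embed the last token and unembed it directly; nothing else is used."""
    x = W_E[context[-1]]
    return [sum(x[i] * W_U[i][j] for i in range(len(x))) for j in range(len(VOCAB))]


def predict(context):
    logits = zero_layer_logits(context)
    return VOCAB[logits.index(max(logits))]


print(predict(["the"]))                # cat
print(predict(["sat", "cat", "the"]))  # cat: earlier tokens are ignored
```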

The Challenge of Polysemanticity

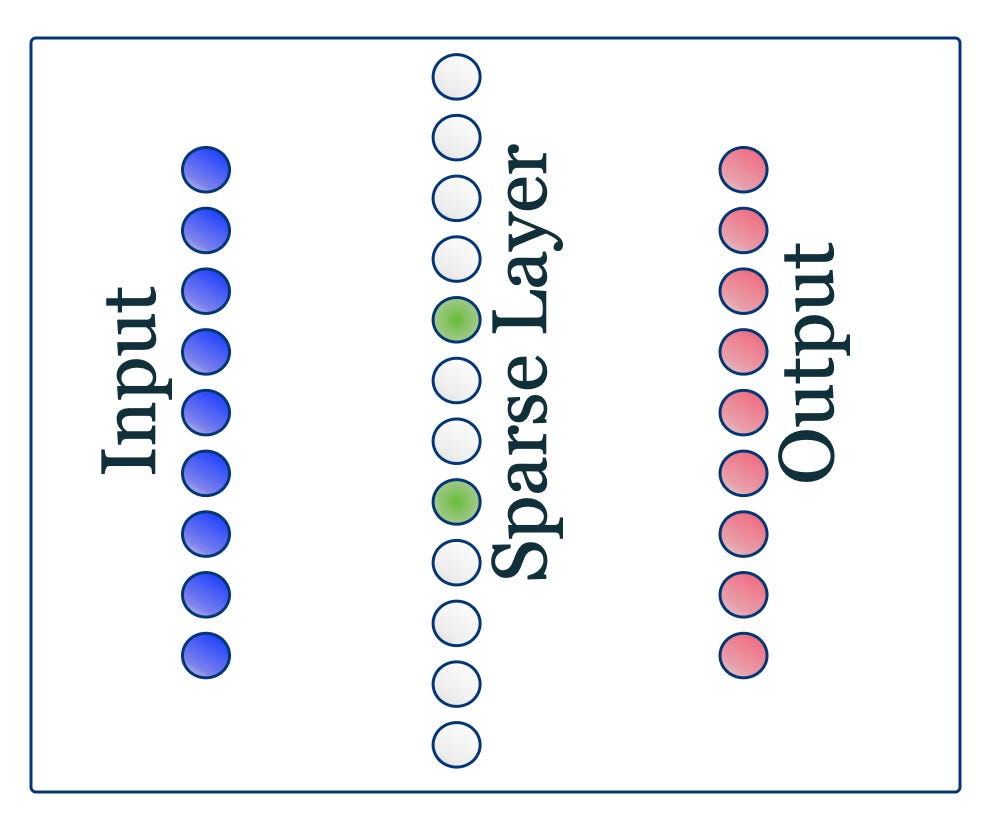

Before we can study circuits, we need to identify clean building blocks, but there’s a problem. Individual neurons in LLMs often respond to several unrelated concepts. A single neuron might activate for “code,” “math,” and “the Golden Gate Bridge.” This phenomenon is called polysemanticity (Elhage et al. 2022).

Why does this happen? Research on toy models of superposition showed that when features are sparse, a network can represent more features than it has neurons by storing them in overlapping directions. As a result, several unrelated concepts end up sharing the same neurons (Elhage et al. 2022). This makes circuits very hard to trace at the neuron level.

One solution is the sparse autoencoder (SAE). An SAE is an auxiliary model trained to reconstruct the original model’s internal activations through a hidden layer that is typically much wider than the activations themselves. A sparsity penalty keeps only a few hidden units active for any given input. These hidden units are the features: units that tend to activate only for specific, interpretable concepts (like “mentions of code” or “legal terminology”). Anthropic demonstrated that sparse autoencoders can extract meaningful features even from large models such as Claude 3 Sonnet (Templeton et al. 2024a).
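The sketch below is a toy SAE in plain Python, under stated assumptions. It shows only the forward pass and the loss that training would minimize (reconstruction error plus an L1 penalty on feature activations). The training loop, usually gradient descent in an autodiff framework, is omitted. The sizes, initialization scale, and `l1_coeff` are arbitrary. Subtracting the decoder bias before encoding is one common variant, not the only design.

```python
import random


def relu(v):
    return [max(0.0, a) for a in v]


def matvec(mat, vec):
    """mat is a list of rows; returns mat @ vec."""
    return [sum(m * x for m, x in zip(row, vec)) for row in mat]


class SparseAutoencoder:
    """Forward pass and loss of a toy SAE: d_model inputs -> d_hidden features."""

    def __init__(self, d_model, d_hidden, seed=0):
        rng = random.Random(seed)
        # d_hidden is chosen larger than d_model: the hidden layer is overcomplete.
        self.W_enc = [[rng.gauss(0, 0.5) for _ in range(d_model)] for _ in range(d_hidden)]
        self.b_enc = [0.0] * d_hidden
        self.W_dec = [[rng.gauss(0, 0.5) for _ in range(d_hidden)] for _ in range(d_model)]
        self.b_dec = [0.0] * d_model

    def encode(self, x):
        centered = [a - b for a, b in zip(x, self.b_dec)]
        pre = matvec(self.W_enc, centered)
        return relu([p + b for p, b in zip(pre, self.b_enc)])  # feature activations

    def decode(self, f):
        return [r + b for r, b in zip(matvec(self.W_dec, f), self.b_dec)]

    def loss(self, x, l1_coeff=1e-3):
        f = self.encode(x)
        x_hat = self.decode(f)
        reconstruction = sum((a - b) ** 2 for a, b in zip(x, x_hat))
        sparsity = sum(abs(a) for a in f)  # during training, this L1 term pushes most features to zero
        return reconstruction + l1_coeff * sparsity, f


sae = SparseAutoencoder(d_model=4, d_hidden=16)
activation = [0.2, -1.0, 0.7, 0.0]  # stand-in for one residual-stream vector
total_loss, features = sae.loss(activation)
print(f"{sum(a > 0 for a in features)} of {len(features)} features active, loss={total_loss:.3f}")
```

With random, untrained weights, roughly half the features will be active. Training against this loss is what makes the active set small and, ideally, interpretable.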

Related Techniques

Transcoders replace an MLP with a wider, sparsely activating layer that is trained to reproduce the MLP’s output from its input. This linearizes interactions and enables weights-based circuit analysis through MLP blocks (Dunefsky, Chlenski & Nanda 2024). Crosscoders learn cross-layer mappings. They are particularly useful for model diffing and for tracking how features move across layers or emerge after fine-tuning (Lindsey et al. 2024; follow-ups on group crosscoders in late 2024).

Once we have these clean features, we can trace how they connect to form circuits.
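The main difference between a transcoder and a sparse autoencoder is the training target. Instead of reconstructing its own input, a transcoder reads the MLP’s input and is trained to reproduce the MLP’s output. Here is a minimal sketch of that objective. A made-up two-dimensional `original_mlp` stands in for the real block, and the sizes and penalty weight are arbitrary assumptions.

```python
import random


def relu(v):
    return [max(0.0, a) for a in v]


def matvec(mat, vec):
    return [sum(m * x for m, x in zip(row, vec)) for row in mat]


def original_mlp(x):
    """Hypothetical stand-in for the model's real MLP block."""
    hidden = relu([x[0] - x[1], x[0] + x[1]])
    return [hidden[0], 2.0 * hidden[1]]


class Transcoder:
    """Wide, sparse layer trained to map MLP input -> MLP output."""

    def __init__(self, d_model=2, d_hidden=8, seed=0):
        rng = random.Random(seed)
        self.W_enc = [[rng.gauss(0, 0.5) for _ in range(d_model)] for _ in range(d_hidden)]
        self.b_enc = [0.0] * d_hidden
        self.W_dec = [[rng.gauss(0, 0.5) for _ in range(d_hidden)] for _ in range(d_model)]
        self.b_dec = [0.0] * d_model

    def forward(self, mlp_input):
        features = relu([p + b for p, b in zip(matvec(self.W_enc, mlp_input), self.b_enc)])
        output = [r + b for r, b in zip(matvec(self.W_dec, features), self.b_dec)]
        return output, features

    def loss(self, mlp_input, l1_coeff=1e-3):
        prediction, features = self.forward(mlp_input)
        target = original_mlp(mlp_input)  # unlike an SAE, the target is the MLP's output
        mse = sum((p - t) ** 2 for p, t in zip(prediction, target))
        return mse + l1_coeff * sum(features), features  # features are >= 0, so sum == L1


tc = Transcoder()
loss_value, feats = tc.loss([1.0, 0.5])
print(len(feats), loss_value)
```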

How Researchers Discover Circuits

Circuit Discovery Methods

Circuit Tracing

Anthropic developed Circuit Tracing, a systematic technique for mapping feature interactions that builds on earlier work on sparse feature circuits (Marks et al. 2025). It works like this:

Replace components: Swap the model’s MLP blocks for a trained cross-layer transcoder, making feature interactions more linear and traceable.

Build an attribution graph: Feed in a prompt and construct a graph where nodes are active features and token embeddings, and edges show how much each feature influences downstream features.

Prune and validate: Remove less important paths. Then test the circuit by intervening on features (for example, suppressing or amplifying them) and checking that the model’s output changes as the graph predicts.

The result is a human-readable causal graph of which features contribute to the model’s answer (Marks et al. 2025).
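The sketch below is a heavily simplified version of the build-and-prune steps. It keeps only a direct-effect edge rule (source activation times the linear weight to the target), followed by threshold pruning and a backward walk from the output. Real pipelines compute edges through the full replacement model and use more careful pruning. All node names and numbers here are hypothetical.

```python
def attribution_edges(activations, weights):
    """Direct-effect edge weight: source activation times the linear weight to the target."""
    return {(src, dst): activations[src] * w for (src, dst), w in weights.items()}


def prune(edges, threshold):
    """Drop edges whose absolute attribution falls below the threshold."""
    return {edge: a for edge, a in edges.items() if abs(a) >= threshold}


def reachable_to(output, edges):
    """Walk edges backwards from the output; return nodes that still reach it."""
    keep, frontier = {output}, [output]
    while frontier:
        node = frontier.pop()
        for src, dst in edges:
            if dst == node and src not in keep:
                keep.add(src)
                frontier.append(src)
    return keep


# Hypothetical active nodes (a token embedding and three features) and weights.
activations = {"tok_A": 1.0, "feat_1": 0.8, "feat_2": 0.5, "feat_3": 0.9}
weights = {
    ("tok_A", "feat_1"): 0.9,
    ("tok_A", "feat_2"): 0.1,
    ("feat_1", "out"): 1.5,
    ("feat_2", "out"): 0.2,
    ("feat_3", "feat_2"): 0.4,
}

edges = attribution_edges(activations, weights)
kept = prune(edges, threshold=0.3)
print(sorted(reachable_to("out", kept)))  # ['feat_1', 'out', 'tok_A']
```

The edge `feat_3 → feat_2` survives the threshold but is still dropped from the final graph. After pruning, `feat_2` no longer has a path to the output.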

Reverse Engineering with Toy Models

Sometimes it’s easier to understand circuits in simplified transformers with two layers or fewer:

Zero-layer models implement simple bigram patterns (next word depends only on current word) (Olsson et al. 2022a)

One-layer models can create skip-trigram patterns (looking back to distant tokens) (Olsson et al. 2022a)

Two-layer models can implement induction heads (copying tokens based on pattern matching) (Olsson et al. 2022b)

By analyzing these minimal models, researchers build intuition that transfers to understanding larger, more complex transformers.
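The induction pattern is easy to state as an ordinary function. The sketch below reproduces the behavior of an induction head, not its mechanism. In a two-layer attention-only model, the same behavior arises from a previous-token head in the first layer composing with an induction head in the second (Elhage et al. 2021; Olsson et al. 2022a). The example tokens are invented.

```python
def induction_predict(tokens):
    """Mimic an induction head: [A][B] ... [A] -> predict [B].

    Find the most recent earlier occurrence of the current token and
    return the token that followed it; return None if there is no match.
    """
    current = tokens[-1]
    for i in range(len(tokens) - 2, -1, -1):  # scan earlier positions, newest first
        if tokens[i] == current:
            return tokens[i + 1]              # copy what came next last time
    return None


print(induction_predict(["Mr", "Dursley", "was", "proud", ".", "Mr"]))  # Dursley
```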

Case Studies

Case Study 1: Indirect Object Identification (IOI)

One of the most thoroughly studied circuits handles the Indirect Object Identification task. Given “When John and Mary went to the store, John gave a toy to ___,” the model must predict “Mary.”

Researchers discovered that GPT-2 small uses a circuit composed of 26 attention heads organized into seven functional groups (Wang et al. 2023). These heads work together to:

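Detect that one name (John) is repeated, using duplicate-token, induction, and previous-token heads

Suppress attention to the repeated name, using S-inhibition heads

Copy the remaining name (Mary) to the output, using name mover heads

Adjust or back up that prediction, using negative and backup name mover heads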

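The authors evaluated this explanation against three quantitative criteria:

Faithfulness: the circuit alone reproduces the model’s behavior on the task

Completeness: it contains all the components the model uses for the task

Minimality: it contains no unnecessary components

These criteria largely supported the explanation (Wang et al. 2023). However, they also pointed to remaining gaps in our understanding, highlighting areas for future work.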
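Wang et al. (2023) score the IOI task with a logit difference: the logit of the indirect object (Mary) minus the logit of the repeated subject (John). A positive value means the model prefers the correct name. Here is a minimal version with made-up logits:

```python
def logit_difference(logits, io_token, s_token):
    """Positive when the model prefers the indirect object over the repeated subject."""
    return logits[io_token] - logits[s_token]


# Hypothetical final-position logits for the prompt
# "When John and Mary went to the store, John gave a toy to"
logits = {"Mary": 3.5, "John": 1.0, "the": 0.5}
print(logit_difference(logits, io_token="Mary", s_token="John"))  # 2.5
```

In practice, this metric is averaged over many prompts with different names. Ablation and patching experiments then measure how much each head changes it.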

Does the circuit generalize? Follow-up studies tested whether this circuit handles variations in sentence structure. The findings showed that the model reuses the same core components but sometimes adds extra connections to handle new formats, demonstrating both robustness and adaptability (Hanna et al. 2023).

Case Study 2: Shared Circuits for Sequence Continuation

Another fascinating discovery involves pattern continuation. Given “1 2 3”, the model should predict “4”.

Lan et al. (2024) found that in both GPT-2 small and Llama-2-7B, the circuits for continuing sequences in different domains share key components:

Digit sequences: “1 2 3” → “4”

Number words: “one two three” → “four”

Months: “Jan Feb Mar” → “Apr”

The circuit uses shared sub-graphs to detect sequential patterns and predict the next item, regardless of the surface format (Lan et al. 2024).
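As a loose analogy only (this is not how the model computes it), a shared sub-graph can be pictured as one format-independent “successor” step wrapped in format-specific lookups. The domain lists below are illustrative assumptions.

```python
DOMAINS = {
    "digits": ["1", "2", "3", "4", "5", "6", "7", "8", "9"],
    "words": ["one", "two", "three", "four", "five", "six", "seven", "eight", "nine"],
    "months": ["Jan", "Feb", "Mar", "Apr", "May", "Jun",
               "Jul", "Aug", "Sep", "Oct", "Nov", "Dec"],
}


def continue_sequence(tokens):
    """Format-specific lookup, then one shared 'increment the position' step."""
    for items in DOMAINS.values():
        if all(t in items for t in tokens):
            positions = [items.index(t) for t in tokens]
            if all(b - a == 1 for a, b in zip(positions, positions[1:])):
                nxt = positions[-1] + 1  # the shared step, identical for every format
                return items[nxt] if nxt < len(items) else None  # no wrap-around here
    return None


print(continue_sequence(["1", "2", "3"]))          # 4
print(continue_sequence(["one", "two", "three"]))  # four
print(continue_sequence(["Jan", "Feb", "Mar"]))    # Apr
```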

Surprisingly, this same circuit also influences how the model solves simple math word problems.

Why this matters: Discovering that multiple tasks share circuits helps researchers avoid accidentally breaking one capability while trying to improve another. It also reveals how efficiently models reuse internal structures.

Case Study 3: Greater-Than Comparison Circuit

A particularly elegant example comes from research on how models perform numerical reasoning. Consider the prompt “The war lasted from the year 1732 to the year 17”. GPT-2 small needs to predict a valid two-digit completion for the end year, specifically a year greater than 32 (Hanna et al. 2023).
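Hanna et al. (2023) measure performance on this task with a probability difference. It is the total probability the model assigns to end years greater than the start year, minus the total for years less than or equal to it. Values range from -1 to 1, and a value near 1 means the model reliably prefers valid years. Here is a sketch with made-up probabilities:

```python
def probability_difference(year_probs, start_yy):
    """Probability mass on end years > start_yy minus mass on years <= start_yy.

    year_probs maps each two-digit completion (0-99) to the model's probability.
    """
    greater = sum(p for yy, p in year_probs.items() if yy > start_yy)
    not_greater = sum(p for yy, p in year_probs.items() if yy <= start_yy)
    return greater - not_greater


# Hypothetical next-token probabilities after "...from the year 1732 to the year 17"
probs = {30: 0.1, 32: 0.1, 35: 0.5, 40: 0.3}
print(probability_difference(probs, start_yy=32))  # approximately 0.6
```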

Researchers studying GPT-2 small discovered a surprisingly compact circuit that implements this numerical comparison (Hanna et al. 2023). Related work by Nanda et al. (2023) reverse-engineered a small transformer trained on modular addition. It shows how similar mechanistic interpretability techniques can isolate the algorithm a network has learned.

The circuit operates through a clear sequence of steps:

Moving the start year: Attention heads in the middle layers attend to the start-year token (“32”). They move information about it to the final position, where the end year is predicted (Hanna et al. 2023).

Computing the comparison: The final MLP layers use that information to work out which two-digit years exceed the start year.

Output mapping: Those same final MLPs boost the probability of end years greater than the start year (Hanna et al. 2023).

What makes this circuit particularly interesting is its division of labor: attention heads route information about the start year, and MLPs turn that information into a comparison. Rather than memorizing individual year pairs, each component carries out a distinct, identifiable sub-task.

When researchers used path patching and ablation techniques to probe specific parts of the circuit (Hanna et al. 2023), they identified which components were critical for the greater-than operation. Disrupting key computational nodes degraded the model’s ability to perform the comparison. This revealed how each of the circuit’s modular components contributes to the overall numerical reasoning capability.
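Path patching refines a simpler technique, activation patching. In activation patching, an activation recorded on a “clean” input is copied into a run on a “corrupted” input to see how much of the clean behavior it restores. The sketch below shows plain activation patching on an invented three-component toy model. It is not path patching, which additionally restricts the effect to specific downstream paths. The component names, inputs, and the “fraction recovered” normalization are illustrative choices.

```python
def toy_model(x, patch=None):
    """Hypothetical model with three internal activations.

    patch optionally maps a component name to a value that overrides
    what the component would have computed on this input.
    """
    patch = patch or {}
    acts = {}
    acts["head"] = patch.get("head", 2.0 * x)                     # carries the input signal
    acts["noise"] = patch.get("noise", 0.1)                       # ignores the input
    acts["mlp"] = patch.get("mlp", max(0.0, acts["head"] - 1.0))  # reads from "head"
    return acts["mlp"] + acts["noise"], acts


def fraction_recovered(patched_out, clean_out, corrupted_out):
    """1.0 = patch fully restores clean behavior; 0.0 = no effect."""
    return (patched_out - corrupted_out) / (clean_out - corrupted_out)


clean_out, clean_acts = toy_model(3.0)  # "clean" input
corrupted_out, _ = toy_model(0.0)       # "corrupted" input

results = {}
for name in ["head", "noise", "mlp"]:
    patched_out, _ = toy_model(0.0, patch={name: clean_acts[name]})
    results[name] = fraction_recovered(patched_out, clean_out, corrupted_out)

print(results)  # {'head': 1.0, 'noise': 0.0, 'mlp': 1.0}
```

Both `head` and `mlp` score 1.0 here because the head’s effect runs entirely through the MLP. Separating such direct and mediated routes is what path patching is designed for.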

Generalization Insights

The authors also found related tasks that activate the same circuit (Hanna et al. 2023). They conclude that GPT-2 small computes greater-than using a complex but general mechanism that activates across diverse contexts, rather than through narrow pattern matching on the year format.

The greater-than circuit exemplifies how even seemingly simple logical operations emerge from the interaction of specialized components, each performing a well-defined sub-task that contributes to the overall computation.

Modular Circuits: Toward Global Interpretability

Most circuit research focuses on one task at a time, which is slow and expensive. A promising direction called ModCirc (Modular Circuits) proposes building a vocabulary of reusable circuit components (He et al. 2025).

The idea is to identify and catalog small sub-graphs with known functions, like LEGO blocks for model behavior. When analyzing a new task, researchers could check which blocks are active rather than starting from scratch.
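The sketch below is not He et al.’s method. It is a hypothetical illustration of “checking which blocks are active”: it matches a new task’s circuit edges against a catalogue of known sub-graphs by simple edge overlap. The module names, node labels, and coverage threshold are all invented.

```python
# Hypothetical catalogue: module name -> set of (source, target) edges.
LIBRARY = {
    "duplicate_detection": {("n1", "n2"), ("n2", "n3")},
    "name_copying": {("n3", "n4"), ("n4", "logits")},
    "successor": {("n5", "n6")},
}


def active_modules(task_edges, library, min_coverage=0.5):
    """Return modules whose edges appear in the task's circuit, with their coverage."""
    found = {}
    for name, module_edges in library.items():
        coverage = len(module_edges & task_edges) / len(module_edges)
        if coverage >= min_coverage:
            found[name] = coverage
    return found


# Edges of a newly discovered (hypothetical) task circuit.
task_edges = {("n1", "n2"), ("n2", "n3"), ("n4", "logits"), ("n7", "n4")}
print(active_modules(task_edges, LIBRARY))
# {'duplicate_detection': 1.0, 'name_copying': 0.5}
```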

This could make interpretability analysis much more scalable and practical (He et al. 2025).

Why Circuits Matter

Understanding circuits has implications across multiple dimensions:

Interpretability and Safety

Circuits let us explain model decisions, detect potential deception or misalignment, and build AI systems that behave as intended (Elhage et al. 2021; Marks et al. 2025). Knowing how a model reaches conclusions is crucial for deploying AI safely.

Understanding Generalization

Circuit analyses reveal how LLMs generalize across different prompts and tasks. The adaptive behavior seen in IOI circuits shows that models can flexibly reuse components even in situations where the original algorithm shouldn’t apply (Hanna et al. 2023).

Reusability and Efficiency

Shared and modular circuits demonstrate that LLMs efficiently reuse the same substructures for related tasks (Lan et al. 2024; He et al. 2025). A vocabulary of modular circuits could dramatically reduce the cost of future interpretability work.

Scalable Analysis Tools

Sparse autoencoders, transcoders, and circuit tracing make it possible to extract interpretable features from large models and translate them into human-readable causal graphs, essential for understanding frontier AI systems (Templeton et al. 2024a; Dunefsky et al. 2024; Marks et al. 2025; Lindsey et al. 2024).

Theoretical Insights

The mathematical framework for transformer circuits shows that the behavior of small attention-only transformers can be decomposed into simple algorithms: bigram statistics, skip-trigrams, and induction heads (Elhage et al. 2021; Olsson et al. 2022a). Research on superposition explains why polysemanticity occurs and why sparse representations help overcome it (Elhage et al. 2022).

Looking Ahead

Mechanistic interpretability is advancing rapidly. These next steps reflect current community goals rather than finalized results.

Researchers are beginning to scale sparse feature extraction to larger models, trace circuits in frontier systems such as Claude 3.5 Haiku (Templeton et al. 2024b; 2025 updates), and develop interactive tools for exploring attribution graphs.

Key future directions:

Better feature extraction: Training larger sparse autoencoders and cross-layer transcoders with fewer inactive units to capture more concepts more reliably (Templeton et al. 2024a; Dunefsky et al. 2024)

Global circuit vocabularies: Developing and validating modular circuit libraries that enable cross-task reuse and faster analysis (He et al. 2025)

Dynamic circuits: Understanding how circuits change during fine-tuning, instruction-tuning, or across different contexts

Accessible tooling: Building user-friendly platforms (like TransformerLens (Nanda 2022) and interactive circuit notebooks) that democratize circuit analysis and integrate interpretability into standard model development workflows

Ethical considerations: Ensuring interpretability work respects privacy, doesn’t inadvertently reveal sensitive training data, and aligns with responsible AI development principles.

Conclusion

As we peer deeper inside the black box, circuits provide a roadmap for making LLMs more transparent, predictable, and controllable. Understanding and cataloguing these circuits will be crucial for building the next generation of safe and reliable AI systems.

The journey from mysterious neural networks to interpretable, trustworthy AI is well underway, and circuits are lighting the path forward.


Glossary

Ablation: The experimental removal of circuit components to test their necessity.

Attention heads: Components that decide which tokens to focus on and copy information between positions.

Attribution graph: A map showing how features influence each other to produce outputs.

Features: Interpretable units extracted by sparse autoencoders that activate for specific concepts.

MLP layers: Multi-layer perceptron blocks that transform representations.

Residual stream: The central highway in transformers where information flows and accumulates.

References

Dunefsky, J., Chlenski, P., & Nanda, N. (2024). “Transcoders Find Interpretable LLM Feature Circuits.” NeurIPS 2024.

Elhage, N., et al. (2021). “A Mathematical Framework for Transformer Circuits.”

Elhage, N., et al. (2022). “Toy Models of Superposition.”

Hanna, M., Liu, O., & Variengien, A. (2023). “How Does GPT-2 Compute Greater-Than? Interpreting Mathematical Abilities in a Pre-Trained Language Model.”

He, Y., et al. (2025). “Towards Global-level Mechanistic Interpretability: A Perspective of Modular Circuits of Large Language Models.” PMLR v267.

Lan, M., et al. (2024). “Analyzing Shared Circuits in Large Language Models.” EMNLP 2024.

Lindsey, J., Templeton, A., Marcus, J., Conerly, T., Batson, J., & Olah, C. (2024). “Sparse Crosscoders for Cross-Layer Features and Model Diffing.” Transformer Circuits Thread.

Marks, S., Rager, C., Michaud, E. J., Belinkov, Y., Bau, D., & Mueller, A. (2025). “Sparse Feature Circuits: Discovering and Editing Interpretable Causal Graphs in Language Models.” ICLR 2025.

Nanda, N. (2022). “TransformerLens: A Library for Mechanistic Interpretability.”

Nanda, N., et al. (2023). “Progress Measures for Grokking via Mechanistic Interpretability.”

Olsson, C., et al. (2022a). “In-Context Learning and Induction Heads.”

Olsson, C., et al. (2022b). “Induction Heads Illustrated.”

Templeton, A., et al. (2024a). “Scaling Monosemanticity: Extracting Interpretable Features from Claude 3 Sonnet.”

Templeton, A., et al. (2024b). “Mapping the Mind of a Large Language Model.”

Wang, K., Variengien, A., Conmy, A., Shlegeris, B., & Steinhardt, J. (2023). “Interpretability in the Wild: A Circuit for Indirect Object Identification in GPT-2 Small.” ICLR 2023.