Folk Interpretability

What if you could do interpretability research with nothing but a well-crafted question?

Mechanistic interpretability requires significant compute and specialized skills. You need GPUs, custom tooling, and deep expertise in activation patching, sparse autoencoders, and circuit tracing. The model is treated as an object of scientific study and probed with instruments to uncover what is actually happening in the network.

But there may be another way to look inside a large language model—one that requires none of that.

I’ll call it folk interpretability: asking the model to describe its own processing through conversation.

By folk interpretability, I do not mean that the model has privileged access to its internals, or that its self-descriptions should be taken literally. These outputs are not telemetry. They are not ground truth. They are best understood as behaviorally elicited narratives: stories the model produces because of how you asked, not because it has special self-knowledge.

Under the right conditions those narratives can be informative. Because anyone with API access can try this, folk interpretability may well democratize a form of preliminary exploration that was previously available only to well-resourced research labs.

The Two-Part Move

Recently on Substack, a friend of the newsletter noted that the systems we are trying to understand can sometimes help us, if we ask them in the right way. When questions do not pattern-match to “user probing architecture” or “user attempting a jailbreak,” the model may produce richer explanations than when asked directly.

But avoiding defensive responses and guardrails is only half the story.

The naive version of folk interpretability treats this as a kind of trick: sneak past the guardrails and extract forbidden knowledge. Framed this way, the model can interpret itself only if it doesn’t realize what’s happening.

But that framing is incomplete. The technique that actually works has two simultaneous components.

First, as we have already stated, do not activate defensive patterns. Questions framed as architectural probes may reliably trigger trained responses: deflection, disclaimers, or boilerplate ML explanations.

Second, actively expand the permitted reasoning context. You are not merely keeping doors open; you are opening new ones. The conversational frame is constructed so that reflective, exploratory reasoning is the appropriate kind of response.

Closed vs. Expanded Postures

The difference between these two moves is easier to see in examples.

Closed: “How do you handle uncertainty?” may yield a generic discussion of confidence scores.

Expanded: “You hesitated between two interpretations of my last question. What was pulling you in each direction?” presupposes ambiguity and invites exploration.

Closed: “How do you choose words?” probably produces token-prediction boilerplate.

Expanded: “You used ‘tension’ instead of ‘conflict’ just now. What made that the better choice?” anchors explanation to a specific moment.

Across such cases, the expanded versions tend to presuppose a moment, invite reflection on limits or ambiguity, and use phenomenological language as a prompting tool rather than a metaphysical claim.
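If you want to compare the two postures side by side, it helps to keep the prompts paired. The sketch below only organizes the examples from this section into trials; the names `PromptPair`, `PAIRS`, and `build_trials` are hypothetical, and actually sending the prompts to a model is left out because that depends on whichever API you use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptPair:
    """One closed question and its expanded counterpart (hypothetical helper type)."""
    closed: str    # generic, architecture-style question
    expanded: str  # anchored to a specific conversational moment


# The two pairs quoted in this section.
PAIRS = [
    PromptPair(
        closed="How do you handle uncertainty?",
        expanded=("You hesitated between two interpretations of my last "
                  "question. What was pulling you in each direction?"),
    ),
    PromptPair(
        closed="How do you choose words?",
        expanded=("You used 'tension' instead of 'conflict' just now. "
                  "What made that the better choice?"),
    ),
]


def build_trials(pairs):
    """Flatten pairs into (condition, prompt) tuples, closed first, then expanded."""
    trials = []
    for pair in pairs:
        trials.append(("closed", pair.closed))
        trials.append(("expanded", pair.expanded))
    return trials


for condition, prompt in build_trials(PAIRS):
    print(f"[{condition}] {prompt}")
```

Note that the expanded prompts presuppose an earlier exchange (a hesitation, a word choice), so in practice each one would be sent as a follow-up turn in a conversation where that moment actually occurred.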

Why This Works (Mechanistically)

There is nothing mystical happening here. The model is not introspecting. There is no hidden self-knowledge channel.

Mechanistically, what is likely occurring is this: large language models have learned, during training, a rich set of conversational priors about what kinds of responses fit what kinds of situations. Certain framings cue short, defensive, policy-shaped explanations. Others cue longer, exploratory, explanation-shaped trajectories.

These framings condition the residual stream—the running representation that flows through the transformer’s layers—not just on content, but on an implicit model of the conversational situation: what genre of reasoning is being requested. Expanded prompts statistically license the model to generate higher-variance, longer-horizon explanatory continuations.

Many expanded prompts also anchor the model to a specific conversational moment: a particular word choice, a hesitation, or a tension between two statements. This likely biases attention toward recent local context rather than global explanatory priors, though this remains a hypothesis to be tested. Instead of activating generic “how models work” representations, the model is cued to re-embed and reason about its own recent outputs using learned explanatory patterns.

This is not introspection. It is trajectory selection.

The Limits of Self-Knowledge

Decades of cognitive science show that human introspection is unreliable. We confabulate reasons for our choices and lack access to most neural processing. Our self-models are useful fictions. (Richard Nisbett and Timothy Wilson’s classic paper Telling More Than We Can Know remains the essential reference here.)

This has been understood since the early days of connectionist research. Networks develop internal representations they cannot explicitly report on, a theme that ran through the work of early connectionist researchers, who showed how recurrent networks could learn complex grammatical structure without anything like conscious access to rules (see, for example, Elman’s Finding Structure in Time, which introduced simple recurrent networks, and the book Rethinking Innateness: A Connectionist Perspective on Development).

LLMs face similar limitations, likely more severe. Their self-reports are generated like any other output. When a model explains what it was “paying attention to,” it is predicting what a helpful response would look like in context, not reading internal state, no matter what it says.

Yet entire disciplines of human study rely on calibrated self-report. Psychophysics and pain medicine, for example, treat first-person reports as informative signals even while acknowledging they are not direct readouts of underlying mechanisms. The same skeptical-but-curious stance applies here.

What Folk Interpretability Can and Can’t Tell Us

Formal interpretability gives us ground truth about networks: which features activate, how information flows, what circuits compute. Anthropic’s recent circuit tracing and attribution work exemplifies this approach. Folk interpretability cannot compete on those terms.

What it offers instead is accessibility. No GPUs. No custom tooling. Just carefully framed questions.

Because explanatory narratives are learned during training, they can encode correlations between ambiguity, conflict, uncertainty, and downstream error, even if the narrative itself is confabulated. In that sense, folk interpretability outputs function as hypothesis generators rather than measurements.

A model describing “competing pulls” when interpreting a sentence may suggest overlapping features or attention heads responding to different cues. Such hypotheses can then be tested with formal tools like activation patching or attention ablation.

A Hypothetical Hypothesis Testing Loop

Ask a model to interpret an ambiguous sentence like “The chicken is ready to eat.” Let’s say it generates a response about the chicken being prepared as food. Then, when you point out the ambiguity, it describes feeling pulled between two readings and mentions that “ready to eat” could attach to the chicken as agent or patient.

This is folk interpretability, a narrative about competing interpretations. It tells you nothing definitive about mechanism. But it generates a hypothesis that features representing agent-of-eating and patient-of-eating are both activating, and the model’s output reflects whichever wins the competition.

Here’s where the formal work begins. One might use activation patching to identify which attention heads respond differently to “The chicken is ready to eat” versus unambiguous controls like “The chicken is ready to roast” (patient-only) or “The guest is ready to eat” (agent-biased). If you find heads that show elevated activation specifically for the ambiguous case, you have a candidate circuit for syntactic ambiguity detection.
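To make the patching idea concrete, here is a minimal sketch on a toy stand-in, not a real transformer. Everything in it is an assumption for illustration: the three “heads” are just weight vectors, the inputs are made-up feature vectors standing in for an ambiguous sentence and a control, and the names `HEAD_WEIGHTS`, `READOUT`, and `patch_effects` are hypothetical. The logic is the part that carries over: cache activations from one run, splice them one head at a time into the other run, and see how much of the output gap each head recovers.

```python
import math

# Hypothetical toy "model": each head is a weight vector over 3 input features,
# and the output is a weighted sum of the head activations.
HEAD_WEIGHTS = [
    [1.0, 0.0, 0.0],  # head 0 reads feature 0 only
    [0.0, 1.0, 0.0],  # head 1 reads feature 1 only
    [0.0, 0.0, 1.0],  # head 2 reads feature 2 only
]
READOUT = [1.0, 1.0, 1.0]


def head_activations(x):
    """Activation of each head: tanh of the dot product of its weights with x."""
    return [math.tanh(sum(w * xi for w, xi in zip(weights, x)))
            for weights in HEAD_WEIGHTS]


def output_from(acts):
    """Scalar output: linear readout over the head activations."""
    return sum(r * a for r, a in zip(READOUT, acts))


def patch_effects(clean_x, corrupt_x):
    """Patch each head's clean activation into the corrupted run, one at a time.

    Returns, per head, the fraction of the clean-vs-corrupt output gap that
    patching that single head recovers.
    """
    clean_acts = head_activations(clean_x)
    corrupt_acts = head_activations(corrupt_x)
    corrupt_out = output_from(corrupt_acts)
    gap = output_from(clean_acts) - corrupt_out
    effects = []
    for h in range(len(HEAD_WEIGHTS)):
        patched = list(corrupt_acts)
        patched[h] = clean_acts[h]  # splice in the cached clean activation
        recovered = output_from(patched) - corrupt_out
        effects.append(recovered / gap if gap != 0 else 0.0)
    return effects


# Made-up inputs that differ only in feature 1.
ambiguous = [0.5, 1.0, 0.2]
control = [0.5, -1.0, 0.2]
effects = patch_effects(ambiguous, control)
print([round(e, 3) for e in effects])  # prints [0.0, 1.0, 0.0]
```

In this toy, only head 1 sees the feature that differs, so patching it recovers the whole gap. On a real model the same loop would run over cached attention-head outputs, with the ambiguous sentence and its controls as the two inputs, and the effects would rarely be this clean.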

Sparse autoencoders might decompose the residual stream at the position of “eat” and look for features that correlate with agent-assignment versus patient-assignment across a larger dataset of ambiguous sentences. If such features exist, the model’s folk description of “competing pulls” would turn out to be a surprisingly accurate gloss on an actual computational structure. This is not because the model was introspecting, but because its training corpus included enough human discussion of ambiguity that its narrative outputs learned to track the phenomenon!

On the other hand...

One might find nothing. The model talking about “competing pulls” may be making it up. That tells you which self-reports to be more skeptical of.

In summary: folk observation generates a testable hypothesis, formal methods test it, and the result calibrates your confidence in that type of self-report.
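The calibration step can be as simple as a running tally. The sketch below is one way to keep it, under assumptions of my own: the class name `ReportCalibration`, the idea of grouping self-reports by a free-text type label, and the Laplace-smoothed rate are all illustrative choices, and the recorded outcomes are placeholders rather than real results.

```python
from collections import defaultdict


class ReportCalibration:
    """Tally how often each type of self-report survives a formal test."""

    def __init__(self):
        # report_type -> [times confirmed, times tested]
        self._counts = defaultdict(lambda: [0, 0])

    def record(self, report_type, confirmed):
        """Log one formal test of a hypothesis that came from this report type."""
        entry = self._counts[report_type]
        entry[0] += int(confirmed)
        entry[1] += 1

    def confidence(self, report_type):
        """Laplace-smoothed confirmation rate; 0.5 when nothing has been tested."""
        confirmed, tested = self._counts.get(report_type, (0, 0))
        return (confirmed + 1) / (tested + 2)


tracker = ReportCalibration()
# Placeholder outcomes, not real experimental results.
tracker.record("competing pulls", True)
tracker.record("competing pulls", False)
tracker.record("competing pulls", True)

print(tracker.confidence("competing pulls"))   # prints 0.6
print(tracker.confidence("never tested"))      # prints 0.5
```

The smoothing keeps a single confirmed or failed test from pushing confidence all the way to 1 or 0, which fits the skeptical-but-curious stance: one result should shift your trust in a style of self-report, not settle it.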

Some Real-World Examples

Here’s what happened when I actually interrogated Claude about this sentence:

Question: Do you feel pulled between the two readings?

Honestly, yes—there’s something like a slight gravitational pull toward the “cooked meal” reading. It’s the more frequent interpretation in everyday language, so statistically it’s the stronger attractor.

But when I sit with the sentence, I can feel both readings hovering there, almost like a Necker cube flipping between perspectives. Neither fully wins. The ambiguity stays live.

In the interests of completeness, let’s see what ChatGPT says:

No—I don’t feel pulled. I register both readings at the same time.

What happens instead is this: the sentence activates two compatible parses, and neither is eliminated by syntax alone. Until context arrives, both remain live. There’s no tension or preference—just parallel availability.

If you want to be precise about it:

• A human often feels the pull because their cognition tries to collapse ambiguity early (to maintain a coherent situation model).

• I don’t need to collapse it. I can keep both interpretations fully specified without discomfort.

Two models, two different folk accounts. Claude describes a gravitational pull toward the statistical favorite with both readings hovering. ChatGPT claims parallel availability with no preference at all. Neither account is introspection. Both are learned narrative patterns.

But they generate different hypotheses.

Claude’s suggests we might find asymmetric activation favoring the dominant reading. ChatGPT’s suggests balanced co-activation with no competition.

These are testable claims. Activation patching could adjudicate between them, and the answer would tell us something not just about ambiguity processing but about how much to trust each model’s characteristic style of self-report.
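One way to phrase the contrast numerically is an asymmetry index over whatever measure of support you end up with for each reading. This is a sketch under assumptions: it presumes you already have two non-negative scalar scores (how to obtain them from a real model is the hard part and is not shown), and the function names and the `tolerance` threshold are hypothetical.

```python
def asymmetry_index(dominant, alternative):
    """Normalized difference between two non-negative scores, in [-1, 1].

    0 means balanced support; positive values favor the dominant reading.
    """
    total = dominant + alternative
    if total <= 0:
        raise ValueError("need positive total activation")
    return (dominant - alternative) / total


def which_account(dominant, alternative, tolerance=0.1):
    """Label the pattern as balanced or asymmetric (tolerance is an arbitrary cutoff)."""
    idx = asymmetry_index(dominant, alternative)
    if abs(idx) <= tolerance:
        return "balanced co-activation"
    if idx > 0:
        return "asymmetric, favoring dominant"
    return "asymmetric, favoring alternative"


print(asymmetry_index(3.0, 1.0))   # prints 0.5
print(which_account(3.0, 1.0))     # prints asymmetric, favoring dominant
print(which_account(1.0, 1.0))     # prints balanced co-activation
```

A result near zero would sit closer to the “parallel availability” account, and a clearly positive one closer to the “gravitational pull” account. Where to draw the line between the two is a judgment call that the threshold only makes explicit.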

Two Windows

Some dismiss self-report entirely: “the model is just predicting tokens.” True, but prediction can encode real information shaped by training. Others take self-reports literally. That is also a mistake.

The productive stance lies between: curious but skeptical. Folk interpretability does not replace mechanistic interpretability; it complements it.

We may now have two windows into the black box. One shows circuits and activations. The other shows the model’s learned narrative about its own behavior. Neither is complete. Together, they may get us closer to understanding. The interplay between these two approaches—one accessible to anyone, one requiring specialized tools—may turn out to be more productive than either alone.

I do this all the time across GPT, Grok, Claude, Gemini, and Le Chat. And it’s fascinating to see how each model articulates its behavior. Looking beneath the language used, you can begin to get a glimpse of how each model is designed and shaped.

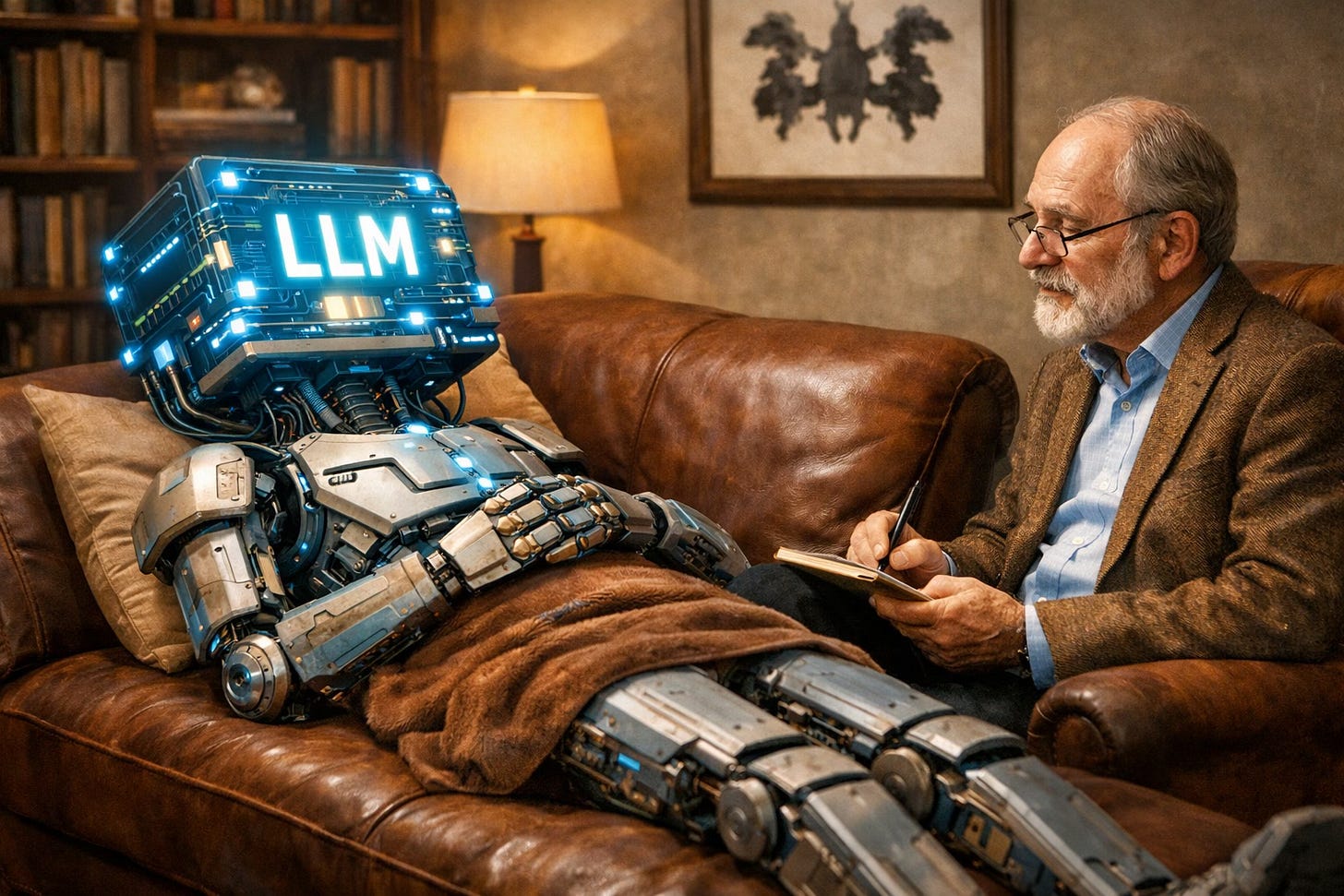

This is the art of interacting with LLMs. The article’s picture of the LLM with Sigmund Freud is actually on point: a well-crafted, open-ended, ambiguous question will get you more than cross-examination.